Privacy Risks

The Common Sense Privacy Program helps parents, teachers, schools, and districts make sense of the privacy risks they may face through our Privacy Ratings, which flag areas of concern. A comprehensive privacy risk assessment can identify these risks and determine which personal information companies are collecting, sharing, and using, so that potential harm to children and students can be minimized. Children require specific protection of their personal information because they may be less aware of the risks, consequences, safeguards, and concerns involved in the processing of their personal information, and of their rights in that processing. Universal privacy protections should apply to the use of children's personal information for marketing or for creating personality or user profiles, and to the collection of personal data from children when they use services offered directly to a child.

The Privacy Program provides an evaluation process that assesses what companies' policies say about their privacy and security practices. Our evaluation results, including the easy-to-understand rating icons described above, indicate not only which companies are transparent about what they do and don't do, but also whether a company's privacy practices and protections meet industry best practices.

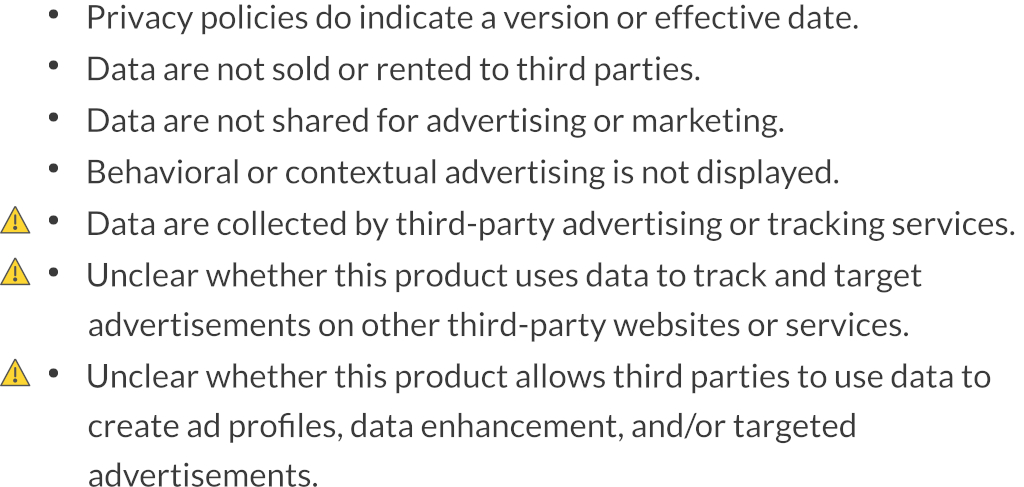

Beyond the rating icons, Common Sense privacy evaluations display the rating criteria for each product and mark any criterion found to be a worse or unclear practice with a yellow alert icon. These yellow alert icons, illustrated below, clearly indicate which factors deserve more scrutiny: looking at this list, a potential user can see which of the vendor's practices caused us concern. We realize that educators' time is short, and we strive to communicate the results of our privacy evaluations in a scalable way. This level of information is more detailed than the ratings and allows those who are curious about why we gave a product a particular rating to see which factors deserved special notice.

The following rating criteria describe some of the most important privacy risks, and the resulting harms, that can occur with technology products intended for use by children and students. These risks also affect parents and educators, both directly as users themselves and indirectly when their children and students are harmed.

Fail Criteria

The following single criterion determines whether a product receives a Fail rating for lacking a privacy policy that protects children's and students' personal information.

Privacy Policy: The privacy policy for the specific product (as opposed to a privacy policy that covers only the company website) must be publicly available. Without transparency into a product's privacy practices, the child, student, parent, or teacher has no basis for knowing how the company will collect, use, or disclose personal information, which could cause unintended harm.

Warning Criteria

The following six criteria are used to determine whether a product receives a Warning rating for unclear or worse practices.

Data Sold: A child's or student's personal information should not be sold or rented to third parties. If it is sold to third parties, there is an increased risk that it could be used in ways that were not intended when the child or student provided it to the company, resulting in unintended harm.

Third-Party Marketing: A child's or student's personal information should not be shared with third parties for advertising or marketing purposes. An application or service that requires a child or student to be contacted by third-party companies for those companies' own advertising or marketing purposes increases the risk of exposure to inappropriate advertising and to influences that exploit children's vulnerability. Third parties who try to influence a child's or student's purchasing behavior for other goods and services may cause unintended harm.

Behavioral Advertising: Behavioral or contextual advertising based on a child's or student's personal information should not be displayed in the product or elsewhere on the internet. Personal information that a child or student provides to an application or service should not be used to exploit that child's or student's specific knowledge, traits, and learned behaviors to influence their desire to purchase goods and services.

Third-Party Tracking: The vendor should not permit third-party advertising services or tracking technologies to collect any information from a user of the application or service. Personal and usage information that a child or student provides to an application or service should not be used by a third party to persistently track that child's or student's actions within the application or service in order to influence what content they see in the product and elsewhere online. Third-party tracking can influence a child's or student's decision-making processes, which may cause unintended harm.

Tracking Users: A child's or student's personal information should not be tracked and used to target them with advertisements on other third-party websites or services. Personal information that a child or student provides to an application or service should not be used by a third party to persistently track that child's or student's actions over time and across the internet on other devices and services.

Data Profile: A company should not allow third parties to use a child's or student's data to create a profile, engage in data enhancement or social advertising, or target advertising. Automated decision-making, including the creation of data profiles for tracking or advertising purposes, can increase the risk of harmful outcomes that may disproportionately and significantly affect children or students.

Pass Details

If a product does not flag any of our criteria for the Fail or Warning ratings, it has met our minimum safeguards and is rated Pass. The Pass rating has no explicit criteria of its own, because privacy concerns and needs vary widely with the type of application or service and with whether it is used at home or in the classroom. Instead, we highlight the following best practices for additional consideration: disclosing whether children are intended users, limiting the collection of personal information, not making information publicly visible, safe interactions, data breach notification, and parental consent.

Children Intended: A vendor should disclose whether children are intended to use the application or service. If its policies are not clear about who the intended users of a product are, there is an increased risk that a child's personal information may be used in ways that were not intended when the child provided it, resulting in unintended harm.

Collection Limitation: A company should limit its collection of personal information from children and students to only what is necessary to provide the application or service. If a company does not limit its collection of personal information, there is an increased risk that a child's or student's personal information could be used in ways that were not intended, resulting in unintended harm.

Visible Data: A company should not enable a child to make personal information publicly available. If a company does not prevent children from making their personal information publicly available, there is an increased risk that a child's or student's personal information could be used by bad actors, resulting in social, emotional, or physical harm.

Safe Interactions: If a company provides social interaction features, those interactions should be limited to trusted friends, classmates, peer groups, or parents and educators. If a company does not limit children's interactions with unknown individuals, there is an increased risk that a child's or student's personal information could be used by bad actors, resulting in social, emotional, or physical harm.

Data Breach: In the event of a data breach, a company should notify users that their unencrypted personal information could have been accessed by unauthorized individuals. If notice is not provided, there is an increased risk that the breached personal information will be used in targeted attacks or phishing attempts to steal additional account credentials and information, resulting in potential social, emotional, or physical harm.

Parental Consent: A company should obtain verifiable parental consent before collecting, using, or disclosing personal information from children under 13 years of age. If parental consent is not obtained, there is an increased risk that a child's or student's personal information could be inadvertently used for prohibited practices, resulting in unintended harm.
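The Fail, Warning, and Pass determination described in this section can be read as a short decision procedure. The Python sketch below is illustrative only and is not the Privacy Program's actual tooling. The `Finding`, `Evaluation`, `flagged_warning_criteria`, and `rate` names are hypothetical. The sketch assumes that a missing product privacy policy is checked first, and that a Warning criterion with no recorded finding counts as unclear.

```python
from dataclasses import dataclass, field
from enum import Enum


class Finding(Enum):
    # Hypothetical labels for how a policy answers a single criterion.
    BETTER = "better"
    WORSE = "worse"
    UNCLEAR = "unclear"


# The six Warning criteria named in this section.
WARNING_CRITERIA = (
    "Data Sold",
    "Third-Party Marketing",
    "Behavioral Advertising",
    "Third-Party Tracking",
    "Tracking Users",
    "Data Profile",
)


@dataclass
class Evaluation:
    # Hypothetical input: whether a product-specific privacy policy is public,
    # plus a finding for each Warning criterion keyed by criterion name.
    has_product_privacy_policy: bool
    findings: dict[str, Finding] = field(default_factory=dict)


def flagged_warning_criteria(evaluation: Evaluation) -> list[str]:
    """Return the Warning criteria whose practice is worse or unclear."""
    return [
        name
        for name in WARNING_CRITERIA
        # Assumption: a criterion with no recorded finding is treated as unclear.
        if evaluation.findings.get(name, Finding.UNCLEAR) is not Finding.BETTER
    ]


def rate(evaluation: Evaluation) -> str:
    """Apply the Fail check first, then the Warning checks, else Pass."""
    if not evaluation.has_product_privacy_policy:
        return "Fail"
    if flagged_warning_criteria(evaluation):
        return "Warning"
    return "Pass"


all_better = {name: Finding.BETTER for name in WARNING_CRITERIA}
mixed = {**all_better, "Data Sold": Finding.UNCLEAR}
print(rate(Evaluation(True, all_better)))            # Pass
print(rate(Evaluation(True, mixed)))                 # Warning
print(flagged_warning_criteria(Evaluation(True, mixed)))  # ['Data Sold']
```

Because the Pass rating has no criteria of its own, `rate` returns Pass only when neither the Fail check nor any Warning check applies. In this sketch, the best practices listed under Pass Details do not change the rating.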